Correlating AWS S3 Breaches

5 ways to monitor activity in S3 buckets, a brief history of S3, and common attacker techniques for dumping data from them.

Welcome to Detection at Scale, a weekly newsletter for SecOps practitioners covering detection engineering, cloud infrastructure, the latest vulns/breaches, and more. Enjoy!

Misconfigured AWS S3 buckets remain a reliable target for attackers, and the trend shows no sign of slowing down. Given the challenges of account sprawl, highly permissive access, and insufficient monitoring (often when you need it most), we should all empathize with those tasked with securing cloud environments. Keeping cloud resources secure by default is ideal. Beyond that, security teams can layer on logging to verify that access to S3 data is authorized and that our security controls work as expected. In this post, we’ll break down how to log requests and modifications to S3 buckets. We’ll also review common techniques attackers use in the wild to target them, and examine sample correlations you can run to detect the early signs of a breach.

If you are interested in the baseline concepts of Correlation, check out the blog below!

All Roads Lead to S3

S3 is home to much of the world’s most valuable data and is used heavily by a massive customer base. AWS launched S3 (the Simple Storage Service) in 2006, dubbing it “the storage for the internet.” Since then, it has remained one of the most resilient cloud services and a key part of architectures designed for data processing, storage, and analytics. As the world becomes more data- and AI-oriented, S3 plays a major role as the backing store for data lakes, housing both structured and unstructured data.

S3 was introduced just as on-premises workloads were beginning to migrate to the cloud:

“Amazon S3 frees developers from worrying about where they are going to store data, if it will be secure, if it will be available when they need it, the costs associated with server maintenance, or whether they have enough storage available.” - Andy Jassy[1]

S3’s massive adoption made it a prime target for attackers. Compromised buckets, often dubbed "leaky buckets," have spilled hundreds of gigabytes of data and millions of personal, financial, and application records. At the time of writing, the most recent breach involving S3 was Sisense. In that case, attackers pulled a secret key from a GitLab repository and pivoted to a bucket containing vast amounts of customer data.

To see a list of S3 breaches, check out the nagwww/s3-leaks repository on GitHub.

In response to organizations losing sensitive data, AWS introduced new configurations to harden buckets, such as its Block Public Access settings, which make it straightforward to keep data private. Those efforts have worked well at preventing buckets from being open to the world. However, the widespread use of long-lived credentials and excessive privileges in the cloud still makes S3 a prime target[2].

While this post doesn’t cover S3 hardening techniques, the AWS documentation offers extensive guidance on the topic.

Auditing S3 bucket activity is a natural complement to hardening and other security controls. This activity ranges from bucket configuration changes (management events) to object access (data events). S3 buckets can be monitored through several mechanisms, which we review next.

Data Sources for Monitoring S3 Bucket Activity

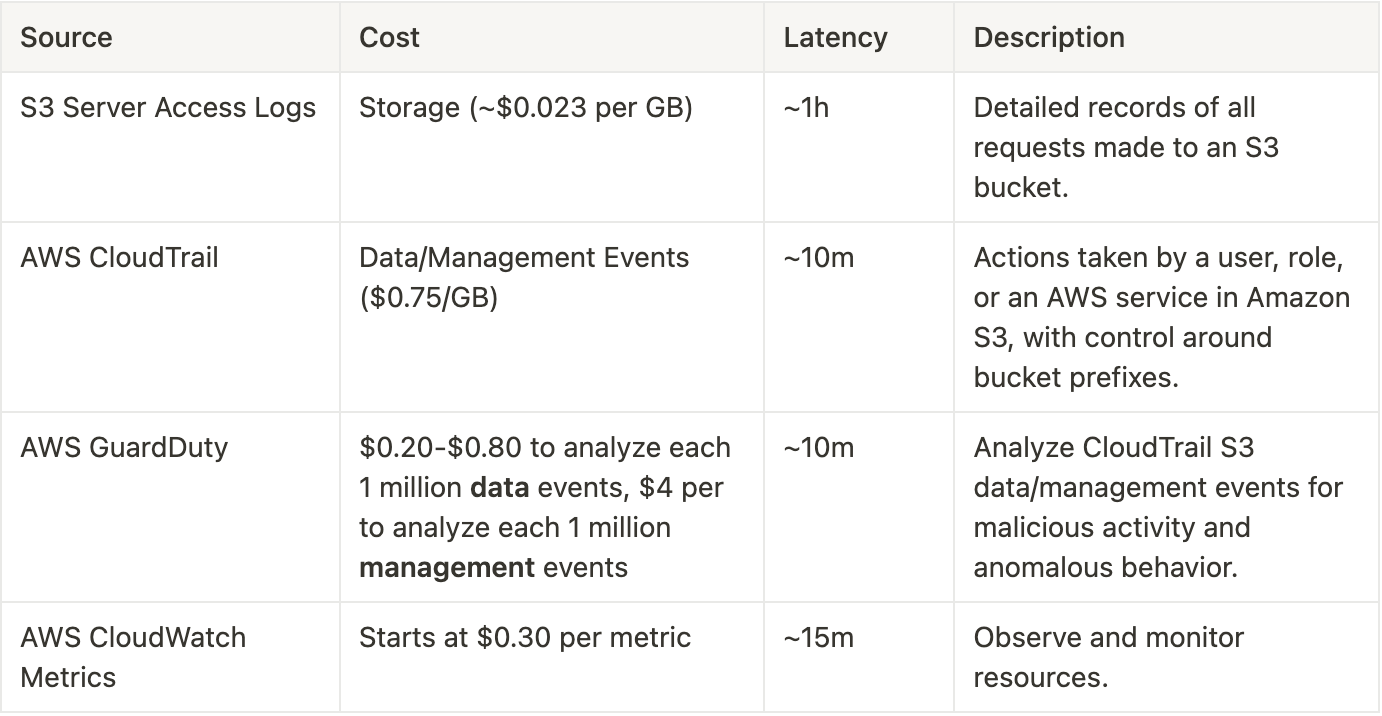

The perennial challenge of SecOps at scale is balancing the cost of a log against its utility in the SIEM. Luckily, we have several options for monitoring S3 buckets, each with different data granularity, cost, and latency[3]. These sources range from comprehensive, HTTP-style request logs to bucket management API calls and anomaly detection alerts. The table below provides an overview:

S3 Server Access Logs capture bucket and object requests (CRUD operations) made by authenticated or anonymous users on the internet. These logs can answer questions such as who is PUTing and GETing data in the bucket, from where (IP), and how much data has been downloaded.
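To make the "who, where, and how much" questions concrete, here is a minimal Python sketch. It parses raw access log lines and sums bytes sent per requester and IP for successful object downloads. The field list follows the documented space-delimited format up to the TLS version. Newer trailing fields are ignored. The function names are hypothetical, and the tokenizer assumes quoted fields contain no escaped quotes.

```python
import re
from collections import defaultdict

# Field order of the space-delimited S3 server access log format. AWS may append
# new fields over time; extra trailing tokens are simply ignored by zip().
FIELDS = [
    "bucket_owner", "bucket", "time", "remote_ip", "requester", "request_id",
    "operation", "key", "request_uri", "http_status", "error_code",
    "bytes_sent", "object_size", "total_time", "turn_around_time", "referer",
    "user_agent", "version_id", "host_id", "signature_version", "cipher_suite",
    "authentication_type", "host_header", "tls_version",
]

# A token is a [bracketed timestamp], a "quoted string", or a run of non-spaces.
TOKEN = re.compile(r'\[[^\]]*\]|"[^"]*"|\S+')


def parse_line(line):
    """Split one raw access log line into a dict keyed by FIELDS."""
    tokens = []
    for tok in TOKEN.findall(line):
        if len(tok) >= 2 and (tok[0], tok[-1]) in {("[", "]"), ('"', '"')}:
            tok = tok[1:-1]  # drop the surrounding brackets or quotes
        tokens.append(tok)
    return dict(zip(FIELDS, tokens))


def bytes_downloaded(lines):
    """Sum bytes sent for successful object GETs, keyed by (requester, remote_ip)."""
    totals = defaultdict(int)
    for line in lines:
        rec = parse_line(line)
        if rec.get("operation") != "REST.GET.OBJECT" or rec.get("http_status") != "200":
            continue
        sent = rec.get("bytes_sent", "-")  # "-" means no bytes were sent
        key = (rec.get("requester", "-"), rec.get("remote_ip", "-"))
        totals[key] += int(sent) if sent.isdigit() else 0
    return dict(totals)
```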

When S3 Server Access Logs are enabled, the S3 service periodically delivers records of requests made to a bucket into a destination bucket of your choice. Delivery is best-effort, and most records arrive within a few hours. We believe you should enable S3 Server Access Logs for production buckets holding sensitive data and control costs with data tiering.
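As a rough sketch of enabling this with boto3, assume `s3_client` is `boto3.client("s3")`. The bucket names and the per-bucket prefix convention are hypothetical. Logging is configured through the S3 `PutBucketLogging` API. The destination bucket generally has to be in the same Region and account, and it must allow the S3 logging service to write to it.

```python
def logging_status(target_bucket, target_prefix):
    """Build the BucketLoggingStatus payload accepted by S3 PutBucketLogging."""
    return {
        "LoggingEnabled": {
            "TargetBucket": target_bucket,
            "TargetPrefix": target_prefix,
        }
    }


def enable_access_logging(s3_client, source_bucket, target_bucket):
    """Turn on server access logging for source_bucket.

    s3_client is assumed to be a boto3 S3 client (boto3.client("s3")). Logs are
    written under a per-bucket prefix so several buckets can share one target.
    """
    prefix = f"{source_bucket}/"
    s3_client.put_bucket_logging(
        Bucket=source_bucket,
        BucketLoggingStatus=logging_status(target_bucket, prefix),
    )
    return prefix
```

For data tiering, a lifecycle rule on the destination bucket can transition older log objects to cheaper storage classes.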

To see an example of S3 access logs, the screenshot below shows a GitHubEnterpriseLogWriter IAM Role successfully PUTing objects to an S3 Bucket called gh-enterprise-audit-log.

While S3 Server Access Logs offer a rich and comprehensive data set for S3 activity, you can also capture similar information with CloudTrail. CloudTrail complements S3 Server Access Logs with management events such as bucket creation and policy updates. CloudTrail data events can also be configured to monitor the same object-level activity, scoped to the buckets and prefixes you want to monitor. Collecting via CloudTrail has three main benefits: configuration is more centralized, logs are delivered with lower latency, and the data can be analyzed by GuardDuty.
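Scoping data events to a prefix is done with CloudTrail advanced event selectors. Below is a minimal sketch. It assumes `cloudtrail_client` is `boto3.client("cloudtrail")`, and the trail, bucket, and prefix names are hypothetical. `PutEventSelectors` replaces a trail’s existing selectors, so the sketch re-includes a management-events selector to avoid silently dropping those events.

```python
def s3_data_event_selectors(bucket, prefix=""):
    """Advanced event selectors: keep management events, add S3 object-level
    data events for one bucket prefix."""
    return [
        {
            "Name": "Management events",
            "FieldSelectors": [{"Field": "eventCategory", "Equals": ["Management"]}],
        },
        {
            "Name": f"S3 data events for {bucket}/{prefix}",
            "FieldSelectors": [
                {"Field": "eventCategory", "Equals": ["Data"]},
                {"Field": "resources.type", "Equals": ["AWS::S3::Object"]},
                {"Field": "resources.ARN", "StartsWith": [f"arn:aws:s3:::{bucket}/{prefix}"]},
            ],
        },
    ]


def apply_selectors(cloudtrail_client, trail_name, selectors):
    # cloudtrail_client is assumed to be boto3.client("cloudtrail").
    # PutEventSelectors REPLACES the trail's existing selectors, which is why
    # the management-events selector is included above.
    return cloudtrail_client.put_event_selectors(
        TrailName=trail_name,
        AdvancedEventSelectors=selectors,
    )
```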

AWS GuardDuty monitors CloudTrail (and other data sources) for a range of attacker tactics and techniques, using machine learning to identify anomalous and suspicious behavior. Ideally, these alerts flag a newly used API, an abnormal volume of events, or a known-bad IP downloading our data. In the SIEM, these alerts can be further filtered and correlated with other atomic rules to add correlation value.
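One way to express that correlation is sketched below in Python. It pairs S3-related GuardDuty findings with atomic SIEM alerts on the same principal within a time window. The flat `type`/`severity`/`principal`/`time` schema is a hypothetical normalized form. In raw GuardDuty JSON, this information is spread across fields such as `Type`, `Severity`, and the `Resource` block. The severity threshold and window are illustrative, not recommendations.

```python
from datetime import timedelta


def is_s3_finding(finding, min_severity=4.0):
    # GuardDuty finding types read ThreatPurpose:ResourceTypeAffected/ThreatFamily,
    # so S3 findings contain ":S3/" (e.g. "Exfiltration:S3/AnomalousBehavior").
    return ":S3/" in finding["type"] and finding["severity"] >= min_severity


def correlate(findings, alerts, window=timedelta(hours=1)):
    """Pair S3 GuardDuty findings with atomic SIEM alerts on the same principal
    that fired within `window` of each other."""
    matches = []
    for f in findings:
        if not is_s3_finding(f):
            continue
        for a in alerts:
            if a["principal"] == f["principal"] and abs(a["time"] - f["time"]) <= window:
                matches.append((f["type"], a["rule"], f["principal"]))
    return matches
```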

Separately from GuardDuty and S3 Server Access Logs, CloudWatch metrics can identify sharp increases in errors, request counts, and downloaded bytes, which could indicate exfiltration or reconnaissance against your buckets. On large datasets, they can also aid incident response by establishing impact timelines to correlate against log data.
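A simple way to flag a "sharp increase" is a rolling baseline: compare each datapoint to the mean plus k standard deviations of the preceding window. The Python sketch below assumes the values are already fetched, for example hourly sums of the `AWS/S3` `BytesDownloaded` request metric. That metric requires a request-metrics configuration on the bucket. The window size and k are hypothetical defaults.

```python
from statistics import mean, stdev


def spikes(values, baseline=24, k=3.0):
    """Return indexes of points that exceed mean + k * stdev of the preceding
    `baseline` points (e.g. 24 hourly datapoints)."""
    flagged = []
    for i in range(baseline, len(values)):
        window = values[i - baseline:i]
        # Guard against a perfectly flat baseline, where stdev is 0.
        sigma = max(stdev(window), 1e-9)
        if values[i] > mean(window) + k * sigma:
            flagged.append(i)
    return flagged
```

Flagged indexes map back to timestamps, which gives the impact timeline to line up against log data.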

Finally, host-based logging tools like syslog, osquery, or your EDR can record AWS CLI commands, such as aws s3 sync and aws s3 cp, run on compromised hosts. If attackers abuse an EC2 instance profile that grants access to S3, these logs can help tell the full story of how data was downloaded.
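A minimal Python sketch of a host-side check follows. Given a process command line from your EDR or osquery, it flags `aws s3 sync|cp|mv` invocations that copy from an `s3://` source to a local destination. It is a heuristic under stated assumptions. It does not unwrap shell wrappers like `sh -c`, and option values placed after the destination could confuse it.

```python
import os
import shlex

TRANSFER_SUBCOMMANDS = {"sync", "cp", "mv"}


def is_s3_download(cmdline):
    """True if cmdline looks like an AWS CLI copy from S3 to a local path."""
    try:
        argv = shlex.split(cmdline)
    except ValueError:
        return False  # unbalanced quotes; treat as non-matching
    if not argv or os.path.basename(argv[0]) not in {"aws", "aws.exe"}:
        return False
    if "s3" not in argv:
        return False
    rest = argv[argv.index("s3") + 1:]
    if not rest or rest[0] not in TRANSFER_SUBCOMMANDS:
        return False
    # Drop flags; what remains are paths (and possibly option values).
    positional = [t for t in rest[1:] if not t.startswith("-")]
    has_s3_source = any(t.startswith("s3://") for t in positional[:-1])
    local_destination = bool(positional) and not positional[-1].startswith("s3://")
    return has_s3_source and local_destination
```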

For more information, check out the AWS documentation for monitoring S3 and our prior blog on Medium about S3 Logging:

S3 Correlations

After configuring logging for your buckets, it’s time to analyze the logs for proactive detection. There are several ways to correlate S3 bucket logs, and per guidance from MITRE, teams should:

Monitor for unusual queries; activity originating from unexpected sources may indicate that improper permissions are set and are allowing access to data. Additionally, failed attempts by a user for a certain object, followed by escalation of privileges by the same user and access to the same object, may indicate suspicious activity.[4]
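The second half of that guidance can be approximated with the Python sketch below: a principal is denied (HTTP 403) on an object and then succeeds on the same object shortly after. This simplification omits the privilege-escalation step in the middle. Requiring an IAM change event between the two would tighten it. The record shape (`requester`, `key`, integer `http_status`, `time`) and the one-hour window are assumptions.

```python
from datetime import timedelta


def denied_then_allowed(records, window=timedelta(hours=1)):
    """Return (requester, key) pairs where a 403 on an object was followed by a
    2xx on the same object, by the same requester, within `window`."""
    last_denied = {}
    hits = []
    for r in sorted(records, key=lambda r: r["time"]):
        pair = (r["requester"], r["key"])
        if r["http_status"] == 403:
            last_denied[pair] = r["time"]
        elif 200 <= r["http_status"] < 300 and pair in last_denied:
            if r["time"] - last_denied[pair] <= window:
                hits.append(pair)
            del last_denied[pair]  # reset after evaluating the success
    return hits
```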

Typical attack patterns progress through three tactics:

- Discovery [Cloud Storage Object Discovery]: attackers map the data available to exfiltrate.
- Collection [Data from Cloud Storage]: attackers access or download the data.
- Exfiltration [Exfiltration Over Alternative Protocol]/[Transfer Data to Cloud Account]: the data moves into the attacker’s control.
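To track that progression per principal, one option is an ordered stage tracker over access log records. The Python sketch below maps access log operations to tactics: `REST.GET.BUCKET` for object listings and `REST.GET.OBJECT` for downloads. It counts how many stages each requester hit in order. The mapping is a hypothetical starting point. Exfiltration usually needs signals from other sources, which would be added to `STAGE_OF` and `ORDER`.

```python
# Hypothetical mapping from S3 server access log operations to MITRE ATT&CK
# tactics; extend it with signals from other sources (e.g. network logs) to
# cover Exfiltration.
STAGE_OF = {
    "REST.GET.BUCKET": "Discovery",   # object listing: Cloud Storage Object Discovery
    "REST.GET.OBJECT": "Collection",  # object download: Data from Cloud Storage
}
ORDER = ["Discovery", "Collection"]


def stages_reached(events):
    """Return, per requester, how many stages of ORDER were observed in order."""
    reached = {}
    for e in sorted(events, key=lambda e: e["time"]):
        stage = STAGE_OF.get(e["operation"])
        if stage is None:
            continue
        nxt = reached.get(e["requester"], 0)
        if nxt < len(ORDER) and stage == ORDER[nxt]:
            reached[e["requester"]] = nxt + 1
    return reached
```

Requesters whose count equals `len(ORDER)` completed the whole chain and are the strongest candidates for review.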

Similarly, we will typically observe Credential Access or Privilege Escalation actions taken by IAM users to gain access to the buckets before enumeration and exfiltration. In the Sisense breach, for example, attackers found a valid access key in a code repository.
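Leaked keys like the one in the Sisense breach are typically long-term IAM user keys. Their access key IDs start with `AKIA`, while temporary STS keys start with `ASIA`. The Python sketch below flags CloudTrail events where a long-term key shows up from an IP address not previously seen for it. It uses the real `userIdentity.accessKeyId` and `sourceIPAddress` fields. The in-memory baseline is a simplification; in practice it would be persisted and aged out. Service-originated events, whose `sourceIPAddress` is an AWS service name, may also need filtering.

```python
def new_ip_for_long_lived_keys(events, known=None):
    """Flag CloudTrail events where a long-term access key (AKIA prefix) is used
    from an IP address not previously seen for that key.

    `known` maps accessKeyId -> set of IPs; pass a persisted baseline in practice.
    The first IP seen for a key seeds the baseline and is not flagged.
    """
    known = {} if known is None else known
    alerts = []
    for event in events:
        key_id = (event.get("userIdentity") or {}).get("accessKeyId", "")
        ip = event.get("sourceIPAddress")
        # ASIA-prefixed keys are temporary STS credentials; skip them here.
        if not key_id.startswith("AKIA") or not ip:
            continue
        seen = known.setdefault(key_id, set())
        if seen and ip not in seen:
            alerts.append((key_id, ip))
        seen.add(ip)
    return alerts
```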

S3 Server Access Logs, CloudTrail Logs, or GuardDuty alerts can assist in monitoring these tactics and techniques.

Access From Unexpected Sources

Monitoring access from unexpected sources aims to identify data access by an entity we don’t expect, which means access controls are not working as intended. In production environments, access to an S3 bucket typically comes from a backend service running in a container or virtual machine, or from IAM roles that manage infrastructure. However, this correlation won’t help if an attacker gains control of a requester we do expect. One example is compromising a system with a reverse proxy and executing commands on behalf of a “trusted” production system.

To implement this correlation, build a pattern-based “allow-list” around your internal operational logic to model expected behavior when analyzing S3 Server Access Logs. The assumed IAM role or user appears in the requester field of each S3 Server Access Log record.

```sql
SELECT bucket, requester, remoteip, COUNT(*) AS requests
FROM aws_s3_server_access_logs
WHERE requester NOT LIKE 'arn:aws:sts::%:assumed-role/RoleName/%'
  AND operation IN ('REST.GET.OBJECT')
GROUP BY bucket, requester, remoteip
```

This logic could include multiple role names, operations, user agents, and more.

The example log below shows a GET object request from a hypothetical log analyzer:

```json
{
  "authenticationtype": "AuthHeader",
  "bucket": "enterprise-data",
  "bucketowner": "9f6a2b1c3d8e4f7b9a0e5c6d7f8a9b0c1d2e3f4b",
  "ciphersuite": "ECDHE-RSA-AES128-GCM-SHA256",
  "hostheader": "enterprise-data.s3.us-west-2.amazonaws.com",
  "hostid": "NVVgg8DDZyRRnAA4zaBGhQ=",
  "httpstatus": 200,
  "key": "2024/04/01/23/55/3b99da21-995a-4dde-b9b9-40f2af08e829.json.log.gz",
  "objectsize": 454,
  "operation": "REST.GET.OBJECT",
  "remoteip": "140.82.115.46",
  "requester": "arn:aws:sts::111111111111:assumed-role/EnterpriseLogReader/analyzer-lambda",
  "requestid": "V9AAADAABBBBCCC8",
  "requesturi": "GET /2024/04/01/23/55/3b99da21-995a-4dde-b9b9-40f2af08e829.json.log.gz HTTP/1.1",
  "signatureversion": "SigV4",
  "time": "2024-04-27 23:55:15.000000000",
  "tlsVersion": "TLSv1.2",
  "totaltime": 44,
  "turnaroundtime": 13,
  "useragent": "aws-sdk-go/1.51.16 (go1.20.2; linux; amd64)"
}
```
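The SQL allow-list above can also be expressed in a detection pipeline over parsed records shaped like the sample log. The Python sketch below uses glob-style patterns via `fnmatch.fnmatchcase`. The `DataPlatformETL` role is hypothetical, and `EnterpriseLogReader` comes from the sample. Both lists would be replaced with your own roles and operations.

```python
from fnmatch import fnmatchcase

# Hypothetical allow-list; adjust the patterns to your own roles and services.
ALLOWED_REQUESTERS = [
    "arn:aws:sts::*:assumed-role/EnterpriseLogReader/*",
    "arn:aws:sts::*:assumed-role/DataPlatformETL/*",
]
WATCHED_OPERATIONS = {"REST.GET.OBJECT"}


def unexpected_access(record):
    """True when a watched operation comes from a requester outside the allow-list.
    Anonymous requests appear as requester "-" and never match a pattern."""
    if record.get("operation") not in WATCHED_OPERATIONS:
        return False
    requester = record.get("requester", "-")
    return not any(fnmatchcase(requester, pattern) for pattern in ALLOWED_REQUESTERS)
```

Applied to the sample record above, the analyzer Lambda’s role matches the first pattern, so no alert fires.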

GuardDuty S3 Anomalous Behavior Findings

AWS GuardDuty can complement the rule above by analyzing anomalous activity in S3 CloudTrail data events. The following finding types work by baselining the behavior of IAM roles and users in your accounts and labeling deviations as “anomalous.” The links below provide insight into the behavior:

Check out the AWS Documentation for the full list of finding types.

GuardDuty can also be configured to export findings to an S3 bucket, from which they can be forwarded to your SIEM for correlation rules.
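Below is a minimal boto3-style sketch of that export. It assumes `guardduty_client` is `boto3.client("guardduty")`, and the bucket and KMS key ARNs are placeholders. GuardDuty’s `CreatePublishingDestination` API takes a detector ID, the `S3` destination type, and a destination bucket ARN plus a KMS key ARN. The bucket policy and key policy also need to allow GuardDuty, which this sketch does not set up.

```python
def export_findings_to_s3(guardduty_client, bucket_arn, kms_key_arn):
    """Create an S3 publishing destination for this Region's GuardDuty detector.

    guardduty_client is assumed to be boto3.client("guardduty"); a Region has at
    most one detector, so the first ID returned is used.
    """
    detector_ids = guardduty_client.list_detectors()["DetectorIds"]
    if not detector_ids:
        raise RuntimeError("GuardDuty is not enabled in this Region")
    return guardduty_client.create_publishing_destination(
        DetectorId=detector_ids[0],
        DestinationType="S3",
        DestinationProperties={"DestinationArn": bucket_arn, "KmsKeyArn": kms_key_arn},
    )
```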

S3 Inventory Access and S3 Downloads

For large buckets, S3 Inventory can be configured to run a scheduled (daily or weekly) job behind the scenes. The job lists all objects and writes the report to, you guessed it, another S3 bucket. Attackers may abuse this feature and the underlying inventory data to enumerate objects in a bucket without running long-running List operations. The following SQL query could be used to identify calls that read inventory configurations.

```sql
SELECT *
FROM aws_cloudtrail
WHERE eventName IN ('GetBucketInventoryConfiguration', 'ListBucketInventoryConfigurations')
```

If an attacker pivots to that bucket to download data, it could indicate active Collection. Look for sequences of these behaviors.

S3 Inventory Hits -> S3 GetObject -> Grouped by ARN/requester
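The sequence above can be sketched in Python as a windowed join over CloudTrail records. Inventory-configuration reads are followed by `GetObject`, grouped by principal ARN. The flattened `eventName`/`arn`/`time` record shape and the six-hour window are assumptions. `GetObject` only appears in CloudTrail if S3 data events are enabled for the bucket.

```python
from datetime import timedelta

INVENTORY_CALLS = {"GetBucketInventoryConfiguration", "ListBucketInventoryConfigurations"}


def inventory_then_download(events, window=timedelta(hours=6)):
    """Return principal ARNs that read an inventory configuration and then
    called GetObject within `window`."""
    last_inventory = {}
    flagged = set()
    for e in sorted(events, key=lambda e: e["time"]):
        arn = e["arn"]
        if e["eventName"] in INVENTORY_CALLS:
            last_inventory[arn] = e["time"]
        elif e["eventName"] == "GetObject" and arn in last_inventory:
            if e["time"] - last_inventory[arn] <= window:
                flagged.add(arn)
    return flagged
```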

Additionally, a simple rule that looks for hundreds or thousands of objects downloaded by a single requester could indicate the use of aws s3 sync commands.
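That volume rule can be sketched in Python by counting successful object GETs per requester per hour and keeping the buckets above a threshold. The record shape (`operation`, integer `http_status`, `requester`, datetime `time`) and the threshold of 500 are hypothetical. Tune both against your own baseline.

```python
from collections import Counter


def bulk_downloaders(records, threshold=500):
    """Count successful GET.OBJECT requests per (requester, hour) and return the
    buckets at or above `threshold`."""
    counts = Counter()
    for r in records:
        if r["operation"] == "REST.GET.OBJECT" and r["http_status"] == 200:
            hour = r["time"].replace(minute=0, second=0, microsecond=0)
            counts[(r["requester"], hour)] += 1
    return {key: n for key, n in counts.items() if n >= threshold}
```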

Modification of Bucket Configurations

If an attacker obtains a valid set of credentials or successfully exploits an SSRF-style vulnerability, they can update bucket permissions to allow cross-account access. Data can then be pulled using resources the attacker owns, enabling exfiltration. Looking for modifications to bucket policies and logging settings made outside of our configuration-management roles can help flag a compromised resource updating buckets.

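```sql
SELECT *
FROM aws_cloudtrail
WHERE eventName IN ('PutBucketLogging', 'PutBucketPolicy')
  -- For assumed roles, userIdentity.arn is the session ARN
  -- (arn:aws:sts::<account>:assumed-role/<role>/<session>)
  AND userIdentity.arn NOT IN ('<expected IAM roles>')
```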
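To focus on the cross-account case, the new policy can be inspected for principals outside your account. The Python sketch below takes a bucket policy document (a dict or JSON string). It returns Allow-statement principals that are public (`"*"`) or belong to another account ID. It assumes the policy can be pulled from the `PutBucketPolicy` event’s `requestParameters` (assumed here to be under `bucketPolicy`). It also ignores `Condition` blocks, which can legitimately restrict a broad principal.

```python
import json
import re

# Matches the 12-digit account ID in IAM/STS principal ARNs (any AWS partition).
ACCOUNT_IN_ARN = re.compile(r"^arn:aws[\w-]*:(?:iam|sts)::(\d{12}):")


def external_principals(policy, own_account):
    """Return principals in Allow statements that are public ("*") or belong to
    another AWS account. Condition blocks are ignored in this sketch."""
    if isinstance(policy, str):
        policy = json.loads(policy)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be an object
        statements = [statements]
    found = set()
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        principal = stmt.get("Principal", {})
        if principal == "*":
            found.add("*")
            continue
        aws = principal.get("AWS", []) if isinstance(principal, dict) else []
        for p in [aws] if isinstance(aws, str) else aws:
            if p == "*":
                found.add(p)
            elif p.isdigit():
                if p != own_account:
                    found.add(p)
            else:
                m = ACCOUNT_IN_ARN.match(p)
                if m and m.group(1) != own_account:
                    found.add(p)
    return found
```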

Attackers may also disable server access logging to cover their tracks. These API calls are recorded as standard CloudTrail management events, which, unlike data events, require no special configuration to be enabled in your account.
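Turning logging off is done with `PutBucketLogging` and an empty logging status, so a Python check can distinguish "disabled" from "reconfigured". The sketch below assumes CloudTrail records the request body under `requestParameters.BucketLoggingStatus`. It also assumes a disable request carries no `LoggingEnabled` element. Verify that layout against your own events before relying on it.

```python
def disables_access_logging(event):
    """True when a PutBucketLogging CloudTrail event carries a BucketLoggingStatus
    without a LoggingEnabled element, i.e. server access logging is turned off.
    The requestParameters layout is an assumption; verify it against your logs."""
    if event.get("eventName") != "PutBucketLogging":
        return False
    params = event.get("requestParameters") or {}
    status = params.get("BucketLoggingStatus") or {}
    return "LoggingEnabled" not in status
```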

GuardDuty can also detect changes to a bucket Policy with the following finding types:

Other Exfiltration Techniques

Aside from native AWS transfer mechanisms like S3, attackers may also attempt to exfiltrate data over protocols such as SSH or DNS from virtual machines in your environment. GuardDuty can help when paired with VPC Flow Logs and other network logs. Beyond it, additional host- and network-based monitoring can be employed to detect these styles of exfiltration.
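For the DNS case, a common heuristic is to flag query names whose leftmost label is unusually long or random-looking, since exfiltration tools often encode data into subdomains. The Python sketch below applies this to query names from a source such as Route 53 Resolver query logs. The length and Shannon-entropy thresholds are hypothetical and will produce false positives on some CDNs and telemetry domains.

```python
import math
from collections import Counter


def shannon_entropy(s):
    """Shannon entropy of a string in bits per character."""
    if not s:
        return 0.0
    n = len(s)
    return -sum(c / n * math.log2(c / n) for c in Counter(s).values())


def suspicious_query(qname, max_label_len=40, entropy_threshold=3.5):
    """Flag DNS names whose leftmost label is unusually long or random-looking."""
    label = qname.rstrip(".").split(".")[0]
    if len(label) > max_label_len:
        return True
    return len(label) >= 16 and shannon_entropy(label) > entropy_threshold
```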

Patching the Leaky Buckets

Understanding and monitoring our cloud environment, especially AWS S3 buckets, is crucial to securing our data. Knowing which buckets contain the most valuable information and who should have access to them is fundamental. Monitoring strategies that balance cost and usability can significantly strengthen our defenses. By staying vigilant and proactive, we can reduce the risk of data breaches and keep our data safe in the cloud.

Thumbnail photo by Heather McKean on Unsplash

Additional Reading

https://docs.aws.amazon.com/AmazonS3/latest/userguide/using-s3-access-logs-to-identify-requests.html

https://auth0.com/blog/guardians-of-the-cloud-automating-response-to-security-events/

https://github.com/RhinoSecurityLabs/cloudgoat/blob/master/scenarios/cloud_breach_s3/README.md

https://github.com/aws-samples/aws-customer-playbook-framework/blob/main/docs/S3_Public_Access.md